The model is not the expensive part. For most enterprise AI programmes, integration into legacy infrastructure can account for 40–60% of total delivery cost, and most business cases never count it.

Every enterprise AI vendor demonstrates their product in a clean environment. The data is structured, the APIs are well-documented, and the system has been built to accept exactly the kind of input their model produces. This is not deception — it is the only rational way to demonstrate a product. But it is profoundly unrepresentative of the environment the product will actually operate in.

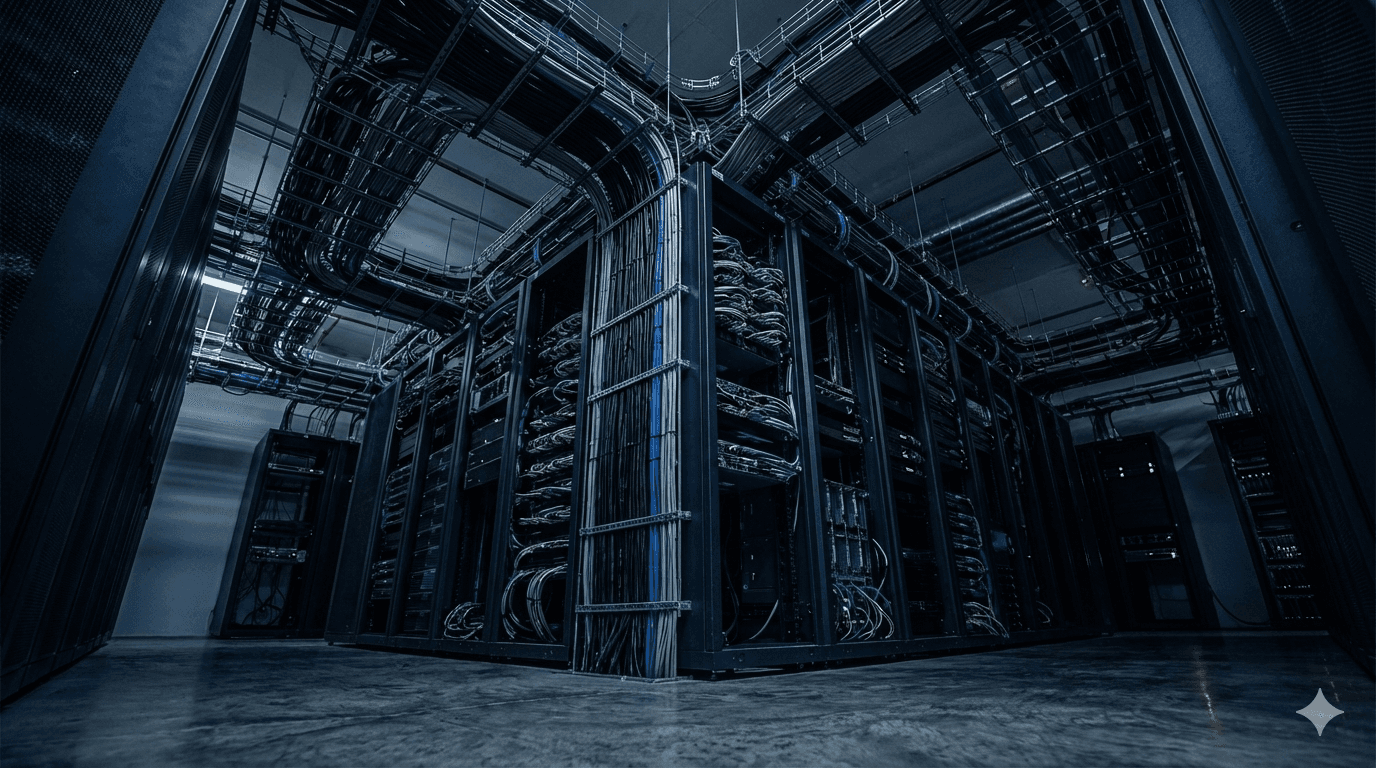

The environment it will actually operate in was built over decades, by different teams, on different stacks, and was never designed to be connected to anything that did not exist at the time it was built. Getting AI to operate reliably in that environment is not a configuration task. It is an engineering programme — and it is consistently the largest cost item that enterprise AI business cases fail to include.

01 — The Model Is the Cheapest Part

The pricing structure of AI vendors creates a systematic distortion in how organisations estimate programme cost. Model inference costs are visible, predictable, and declining. They appear clearly in vendor proposals and are straightforward to project. The engineering work required to connect a model to the systems it depends on does not appear in vendor pricing at all.

Gartner’s analysis of AI programme cost structures in large enterprise deployments suggests that integration and data engineering account for 40–60% of total programme cost once a deployment reaches production scale. That is not a marginal line item. It is the largest single cost component in most programmes — and in most business cases, it is the category most likely to be represented by a placeholder estimate rather than a grounded one.
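To see what that range does to a budget, a minimal arithmetic sketch helps. It assumes a business case that has costed everything except integration (licensing, inference, internal staffing) and asks what the full programme would cost if integration ends up being a given share of the total. The function name and the figure of 1,000,000 are illustrative assumptions, not numbers from any cited study.

```python
def total_programme_cost(non_integration_cost: float, integration_share: float) -> float:
    """Scale a budget that omits integration up to a full programme cost.

    If integration is a share s of the total, the costed items are the
    remaining (1 - s), so total = non_integration_cost / (1 - s).
    """
    if not 0 <= integration_share < 1:
        raise ValueError("integration_share must be in [0, 1)")
    return non_integration_cost / (1 - integration_share)


# Hypothetical business case: 1,000,000 budgeted, integration not counted.
budgeted = 1_000_000
low_estimate = total_programme_cost(budgeted, 0.40)   # integration at 40% of total
high_estimate = total_programme_cost(budgeted, 0.60)  # integration at 60% of total
```

Under these assumptions, a budget that leaves out integration understates the full programme cost by a factor of roughly 1.7 at the low end of the range and 2.5 at the high end.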

L.E.K. Consulting’s 2025 Office of the CFO survey found that integration failure was the most commonly cited blocker to AI value realisation, ahead of model performance, workforce adoption, and budget constraints. The technology worked. The surrounding infrastructure did not support it.

02 — Why Legacy Systems Are Systematically Underestimated

The engineers who built most enterprise core systems are no longer at the companies that run them. The documentation for those systems is incomplete, inconsistent, or describes a version of the system that was superseded in 2017. The APIs, where they exist, were built for the integrations that existed at the time — not for the real-time, high-volume, structured data access that AI requires.

This creates a predictable sequence of discoveries during AI integration work. The first is that the data the AI requires exists, but not in the form the AI needs. The second is that making it available in the right form requires touching systems that carry disproportionate organisational risk (core ERP, financial ledgers, customer records), where change management timelines are measured in quarters, not sprints. The third is that data quality in those systems, at the field level the AI depends on, is lower than any pre-project audit suggested.

None of this is unusual. It is the normal state of enterprise data infrastructure in organisations that have been operating for more than ten years. What is unusual is treating it as a known cost to be budgeted up front rather than one discovered mid-programme.

03 — The Four Integration Costs That Business Cases Miss

Four integration costs recur in post-mortems but rarely appear in business cases.

Data preparation and cleaning. The quality of a model's outputs is bounded by the quality of its inputs. In most enterprise deployments, preparing data to the level the model requires is a programme in its own right, involving source system audits, deduplication, normalisation, and ongoing pipeline maintenance. This work is rarely completed before an AI pilot is funded, and when it is scoped at all, it is almost always underestimated.
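Even the simplest version of this work involves decisions that someone has to make and maintain. The sketch below shows normalisation and deduplication on a handful of customer records. The field names, the alias table, and the choice of email as the matching key are all hypothetical; in a real programme they would come out of the source system audit.

```python
# Hypothetical alias table; a real one would be built from a source system audit.
COUNTRY_ALIASES = {"uk": "GB", "united kingdom": "GB", "gb": "GB"}


def normalise(record: dict) -> dict:
    """Bring one raw record into a consistent form."""
    country = record["country"].strip()
    return {
        "email": record["email"].strip().lower(),
        "name": " ".join(record["name"].split()).title(),  # collapse whitespace
        "country": COUNTRY_ALIASES.get(country.lower(), country.upper()),
    }


def deduplicate(records: list) -> list:
    """Keep the first record seen for each normalised email address."""
    unique = {}
    for record in records:
        clean = normalise(record)
        unique.setdefault(clean["email"], clean)
    return list(unique.values())


raw = [
    {"email": " Ana.Ruiz@Example.com", "name": "ana  ruiz", "country": "UK"},
    {"email": "ana.ruiz@example.com", "name": "Ana Ruiz", "country": "United Kingdom"},
    {"email": "li.wei@example.com", "name": "Li Wei", "country": "sg"},
]
customers = deduplicate(raw)  # the two Ana Ruiz rows collapse into one
```

Each rule here (which field identifies a customer, which spelling wins, what to do with unmapped values) is a small design decision, and the cost of this category comes from multiplying such decisions across every field and every source system.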

Authentication and access control. Enterprise systems do not share data freely. They have permission structures, audit requirements, and access controls that were not designed with AI service accounts in mind. Connecting AI to these systems requires security architecture work that takes time and, in regulated industries, involves compliance review. It does not appear in any model vendor’s pricing sheet.

Change management for source systems. When AI integration requires modifying how a source system operates (changing an API, adding an event trigger, altering a data structure), that change enters the change management queue for that system. In large organisations, those queues are long, and the changes at the front of them are rarely the ones the AI programme needs. Time estimates built on the assumption that source system changes happen on the programme’s schedule are consistently wrong.

Ongoing monitoring and drift management. Once a model is in production, the integration does not become free. Data in source systems changes. Upstream APIs are versioned. Model outputs drift as input distributions shift. Monitoring and maintaining the integration layer is an ongoing engineering cost that most business cases treat as negligible after go-live.
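One common way to watch for shifting input distributions is the population stability index (PSI), which compares how a field's values fall into bins at go-live against how they fall now. The sketch below is one possible check, not a method prescribed by any of the sources cited here; the bin edges, the sample values, and the alert threshold are hypothetical and would need tuning per field.

```python
import math
from bisect import bisect_right


def bin_shares(values, edges, eps=1e-6):
    """Share of values in each bin; eps avoids log(0) for empty bins."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(expected, actual, edges):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical field values at go-live and some months later.
baseline = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
current = [v + 5 for v in baseline]
edges = [2.5, 5, 7.5]

ALERT_THRESHOLD = 0.25  # assumed value; set per field in practice
psi_unchanged = population_stability_index(baseline, baseline, edges)
psi_shifted = population_stability_index(baseline, current, edges)
needs_review = psi_shifted > ALERT_THRESHOLD
```

A check like this is cheap to write. The ongoing cost lies in running it for every input field, deciding who responds when it fires, and keeping the baselines current as source systems change.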

04 — What a Grounded Business Case Looks Like

The organisations that price integration accurately before programmes begin do so by starting with systems architecture, not with vendor pricing.

Before a business case is submitted, the right questions are: which source systems does this AI require access to, in what form, at what latency, and what is the current state of those systems’ data quality and API surface? That analysis produces a real integration scope — and a real cost estimate. It also surfaces, early, the source system constraints that will determine the programme timeline more than any model selection decision will.
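Those questions can be captured as a simple inventory, one entry per source system, so that gaps are recorded before the estimate is written. The sketch below is one hypothetical way to structure it; the class, its field names, the 95% completeness threshold, and the example ERP figures are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class SourceSystemAssessment:
    name: str
    data_form: str              # e.g. "nightly batch export", "REST API"
    required_latency_s: float   # what the AI use case needs
    available_latency_s: float  # what the system can deliver today
    field_completeness: float   # share of required fields populated, 0..1
    has_documented_api: bool

    def gaps(self, min_completeness: float = 0.95) -> list:
        """List the areas where the system falls short of the requirement."""
        found = []
        if self.available_latency_s > self.required_latency_s:
            found.append("latency")
        if self.field_completeness < min_completeness:
            found.append("data quality")
        if not self.has_documented_api:
            found.append("API surface")
        return found


# Hypothetical entry: an ERP that only offers a nightly export.
erp = SourceSystemAssessment(
    name="Core ERP",
    data_form="nightly batch export",
    required_latency_s=60,
    available_latency_s=24 * 60 * 60,
    field_completeness=0.82,
    has_documented_api=False,
)
erp_gaps = erp.gaps()
```

Each gap in the list corresponds to a piece of integration scope that needs its own cost and timeline line in the business case.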

This is not a lengthy process. A two-day systems architecture review, conducted before vendor selection, is sufficient to convert an unconstrained business case into one that has a defensible cost structure. The organisations that skip this step do not avoid the cost. They discover it, later, when the programme is already committed and the options for managing it have narrowed considerably.

05 — The Implication for Procurement

The integration tax has a direct implication for how enterprise AI is procured. Selecting a vendor on model performance and licensing cost, without accounting for integration complexity, produces a cost structure that will surprise nobody except the people who approved the budget.

Procurement processes that evaluate AI platforms on implementation risk — specifically, on how cleanly and quickly they connect to the source systems the organisation actually runs — produce better outcomes than processes that evaluate on model benchmarks alone. The model capabilities of the major platforms are converging. The integration surface they offer, and the professional services ecosystem that supports it, diverge considerably.

The organisations extracting the most value from enterprise AI are not the ones with the highest-performing models. They are the ones who entered their programmes with an accurate picture of what connecting those models to their business would actually require — and built that requirement into the programme from the start.

The integration tax is not a hidden cost in the sense that it is difficult to find. It is a hidden cost in the sense that nobody in the procurement process is incentivised to surface it early. Vendors quote model costs. Systems integrators quote implementation costs against scope that has not yet been defined. Internal teams estimate against the optimistic case because that is what gets the programme funded.

The result is programmes that are structurally underfunded for production and structurally overcommitted on timeline. Getting the business case right does not require new tools or new methodology. It requires asking the infrastructure questions before the vendor questions — and treating the answers as constraints rather than footnotes.